HIGHLIGHTS:

October was mainly focused on physical tasks around our house and land.

However, I did also move the object storage for Gitea and PeerTube from AWS to Backblaze, and I did some work on the PLSS prop for Lunatics.

PRODUCTION:

Worked a little bit more on the PLSS asset for Lunatics, creating specific messages for the digital display, and moving it over to the PLSS prop file.

Published Morevna Project’s animated version of Pepper & Carrot, episode 3, “The Secret Ingredients” as a “Premiere Host” on our Film Freedom PeerTube.

IT:

A high bill alerted me to a large amount of egress (outbound data transfer) on my AWS S3 account, driven by search-engine indexing bots crawling my Gitea archives.

I then did an extensive search for more cost-effective object storage providers, settling on Backblaze as the best fit.

I created a Backblaze B2 account for S3-compatible object storage and used rclone to migrate the Gitea and PeerTube storage to it.

I also added a robots.txt to tell the search bots to leave the archives alone.

I also considered whether I would be better off moving to a co-located, self-maintained server rather than using cloud services, but decided against it in the end.

Received a substitute monitor from Philips to replace the damaged one.

BUSINESS:

Spent some time learning and applying “financial modeling” to our proposed business model for Lunatics Project. Haven’t reached any solid conclusions yet.

Got a notice that our sales tax license might be revoked due to a lack of reported taxable sales. I’m not sure what to do about this; perhaps organize a local sales event?

October Projects

Speedramp Beat Experiment

I decided to simulate a “speedramp” effect by cutting a clip and adjusting the playback speed of each piece, converting a smooth movement into a rhythmic one matched to the beat of a piece of music. Using footage from our vacation of my daughter running across the sand at White Sands, I applied the effect by alternately stretching one segment and compressing the next, in time with the music track. It worked out pretty well.
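The timing arithmetic behind this kind of effect is simple enough to sketch. Assuming the clip is cut at a list of source timestamps and each piece should fill exactly one beat, the playback speed for each piece is its source duration divided by the beat length (60 / BPM). The function name `speed_factors`, the cut times, and the 120 BPM tempo below are hypothetical illustrations, not the settings I actually used.

```python
from typing import List


def speed_factors(cut_times: List[float], bpm: float, beats_per_segment: int = 1) -> List[float]:
    """Return a playback-speed multiplier for each segment between cut points.

    A factor above 1.0 plays the segment faster (compressing it); below 1.0
    plays it slower (stretching it). Every segment is retimed to last exactly
    `beats_per_segment` beats at the given tempo.
    """
    if bpm <= 0 or beats_per_segment <= 0:
        raise ValueError("bpm and beats_per_segment must be positive")
    target = beats_per_segment * 60.0 / bpm  # output length of each segment, in seconds
    factors = []
    for start, end in zip(cut_times, cut_times[1:]):
        if end <= start:
            raise ValueError("cut times must be strictly increasing")
        factors.append((end - start) / target)  # source duration / output duration
    return factors


# Hypothetical example: alternating short and long source segments at 120 BPM
# (one beat = 0.5 s) come out as alternating stretch (0.6x) and compress (1.4x).
print([round(f, 3) for f in speed_factors([0.0, 0.3, 1.0, 1.3, 2.0], bpm=120)])
# prints [0.6, 1.4, 0.6, 1.4]
```

In a video editor, the same numbers would be entered as per-clip speed percentages (60% and 140% here).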

Here’s the resulting video:

Premiere of Animated Pepper & Carrot, Episode 3!

I got a nice chance to test our Film Freedom PeerTube by hosting the premiere of an animated episode of “Pepper & Carrot”. It made a good test run for releasing “Lunatics!”

I decided to go ahead and host all of the available animated episodes, which can be found as a playlist on our Film Freedom Channel:

Blender 3.3

As you know, Blender is now on version 3.3, a long way ahead of the 2.79 version that I have been using on Lunatics. I intend to catch up in a later episode, though I’m not sure exactly when would be best. The further behind I get, the more pressure I feel to do it sooner, so I might make the transition in episode 2.

To soften the impact on production, I may keep the existing 2.79 files and assets that are already developed, and use compositing to combine them with the new elements.

Presumably, it will be easiest to do the compositing in the later version of Blender, so it’s likely that the compositor is the first use I’ll make of it. Indeed, there’s probably no reason not to do the compositing on episode 1 using the later software.

Well… except for one: my “ABX” extension package isn’t compatible with later versions of Blender… yet. So that’s on my to-do list, now.

Gitea, S3, and the Bots…

I’d heard of the problem with exploding Amazon Web Services (AWS) bills before, but I was still surprised when my September bill from Amazon jumped from under $2 to over $45!

Gotcha

What happened? Apparently, after being up for a couple of months, my Gitea repository for Lunatics was discovered by search engine bots, and they started indexing it.

By default, Gitea has no robots.txt file set up, so search engines follow every public link, including the “View Raw” links that Gitea provides to access Git LFS media content (which is actually stored in the AWS S3 object storage service).

This probably wouldn’t be an issue for most software projects hosted on Gitea, which rarely keep large media files in LFS, unless the proprietor wanted to keep their source code private (and Gitea does allow making a repository private, so that only logged-in users with access can see it).

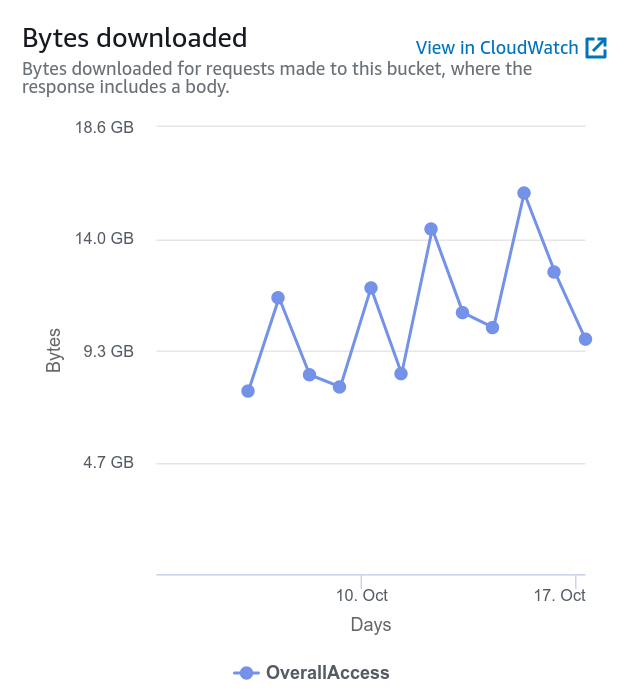

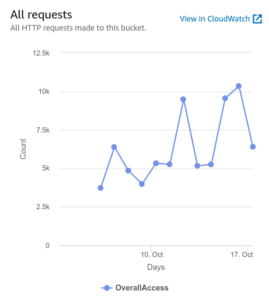

The result was data transfers out of S3 running as high as 15 GB a day!
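For a rough sense of scale, here is a back-of-the-envelope sketch of what a sustained crawl would cost. It assumes the peak rate of 15 GB a day held for a 30-day month. It also assumes an egress price of $0.09 per GB, which is my assumption about S3's standard internet-transfer rate at the time, not a figure from my bill. The sketch ignores any free allowance, storage, and request charges.

```python
def monthly_egress_cost(gb_per_day: float, usd_per_gb: float, days: int = 30,
                        free_gb: float = 0.0) -> float:
    """Estimate a month's data-transfer-out charge from a daily volume.

    `free_gb` is any monthly free allowance to subtract first (assumed 0 here).
    """
    billable_gb = max(0.0, gb_per_day * days - free_gb)
    return billable_gb * usd_per_gb


# 15 GB/day for 30 days is 450 GB; at an assumed $0.09/GB that is about $40.50.
print(f"${monthly_egress_cost(15, 0.09):.2f}")
# prints $40.50
```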

I don’t know if this would’ve continued (perhaps the bots would eventually be sated in their hunger), but it’s clearly not a good thing for the planet, and expensive for me!

I spent some time tracking down what exactly was causing the S3 charges. My initial thought was that perhaps more people were watching my PeerTube videos, but metrics charts like the one above showed that the culprit was Gitea.

Solving the Problem

After figuring out what was probably going on, I temporarily made all of the large repos private, in order to figure out my strategy. And then I pursued two avenues to correct the problem.

Cheaper Storage?

First, I found that other object storage services would not have charged nearly as much for “egress” data transfers. In the long run this probably matters more for PeerTube than for Gitea, though it obviously helps here too.

After a survey of my options, I settled on getting an account with Backblaze. They charge a minimum monthly fee of $5, which is more than I was paying Amazon before this incident, but beyond that they charge a great deal less for both storage and data transfer. My estimate is that I can expect bills between $5 and $8, even for vast amounts of traffic, in contrast to the way the Amazon bill would shoot up if my PeerTube or Gitea became heavily used.
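The trade-off between a flat minimum and lower per-GB rates can be sketched as a tiny cost model. The per-GB rates below are assumptions: roughly the list prices I believe applied around 2022, and worth checking against the providers' current pricing pages. The $5 minimum is as described above. The usage levels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Simplified object-storage price list (all figures are assumptions)."""
    storage_usd_per_gb_month: float
    egress_usd_per_gb: float
    monthly_minimum_usd: float = 0.0

    def monthly_cost(self, stored_gb: float, egress_gb: float) -> float:
        usage = stored_gb * self.storage_usd_per_gb_month + egress_gb * self.egress_usd_per_gb
        # A minimum fee only matters when usage charges fall below it.
        return max(self.monthly_minimum_usd, usage)


# Assumed rates; check current pricing before relying on them.
AWS_S3 = Pricing(storage_usd_per_gb_month=0.023, egress_usd_per_gb=0.09)
BACKBLAZE_B2 = Pricing(storage_usd_per_gb_month=0.005, egress_usd_per_gb=0.01,
                       monthly_minimum_usd=5.0)

# Hypothetical usage levels: (stored GB, egress GB per month).
for stored, egress in [(100, 10), (300, 200)]:
    print(stored, egress,
          f"AWS ${AWS_S3.monthly_cost(stored, egress):.2f}",
          f"B2 ${BACKBLAZE_B2.monthly_cost(stored, egress):.2f}")
```

With these assumed numbers, the minimum makes Backblaze slightly more expensive at light usage ($5.00 vs. $3.20). Once egress grows, the per-GB rates dominate and the comparison flips ($5.00 vs. $24.90).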

Migrating to the new service was easier than I expected, mainly thanks to rclone: a free-software utility for copying data between a wide variety of storage backends, including all the S3 options. The best way to use it is to install it on a well-connected server. It transfers the data from the source to the computer it is running on and then on to the destination, so a home internet connection might not have enough bandwidth.
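As a sketch of the migration step, here is one way to drive rclone from a script on that server. It assumes two remotes have already been set up with `rclone config`. The remote names `aws` and `b2` and the bucket name `gitea-lfs` are hypothetical. The sketch uses `rclone copy` rather than `rclone sync`, because `sync` also deletes files at the destination that aren't in the source. It defaults to `--dry-run`, so rclone only reports what it would do.

```python
import subprocess
from typing import List


def rclone_copy_cmd(src: str, dst: str, dry_run: bool = True, transfers: int = 8) -> List[str]:
    """Build an `rclone copy` command line between two configured remotes.

    `src` and `dst` use rclone's "remote:bucket/path" syntax.
    """
    cmd = ["rclone", "copy", src, dst, "--progress", f"--transfers={transfers}"]
    if dry_run:
        cmd.append("--dry-run")  # report what would be copied without copying
    return cmd


def run(cmd: List[str]) -> None:
    """Run the command, raising CalledProcessError if rclone exits non-zero."""
    subprocess.run(cmd, check=True)


# Hypothetical remotes and bucket; review the dry run, then repeat with dry_run=False.
# run(rclone_copy_cmd("aws:gitea-lfs", "b2:gitea-lfs"))
```

After the copy, Gitea and PeerTube still have to be pointed at the new bucket in their own storage settings. That step isn't covered here.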

However, even if the bill weren’t crushing me, all that data transfer would be wasteful if it’s not really being used.

Reducing the Traffic?

So, second, I investigated how I might encourage the bots not to download all those LFS files. A query to the Gitea Discord channel brought up the suggestion of using robots.txt.

I’ve always regarded robots.txt with some skepticism, since there’s no enforcement of the rules, and it is (wrongly, IMHO) based on “opt-out” rather than “opt-in” (which means the file itself tells a potential attacker where to attack). This is, of course, thinking of the search engines as adversaries.

However, this actually does them a service as well: search engines have little to gain from downloading the large media files. They can’t do much with them anyway, and the summary page contains the relevant information for web searches.

It turns out that adding a robots.txt file is as simple as putting the file in the “custom” / “CustomPath” directory for your Gitea installation, and the following robots.txt file appears to do what I needed:

User-agent: *
Disallow: /*/media/*

This matches all of the links going into the LFS S3 storage from Gitea. I was able to verify this using a robots.txt validator.
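To check which URLs a rule like this catches, here is a minimal sketch of the wildcard matching used by the major search engines (and described in RFC 9309). In that scheme, `*` matches any run of characters, including `/`. A trailing `$` anchors the end of the URL, and otherwise a rule matches as a prefix. As far as I know, Python's standard `urllib.robotparser` only does plain prefix matching and doesn't interpret `*` inside a path, which is why the matcher is hand-rolled here. The sketch ignores `Allow` lines and precedence rules. The example paths are hypothetical, modeled on Gitea's `/owner/repo/media/branch/...` URLs.

```python
import re
from typing import Iterable


def rule_to_regex(rule: str) -> "re.Pattern[str]":
    """Translate a robots.txt path rule into a regex anchored at the path start."""
    anchored_end = rule.endswith("$")
    if anchored_end:
        rule = rule[:-1]
    # Escape literal text, then turn each '*' back into "any characters".
    body = ".*".join(re.escape(part) for part in rule.split("*"))
    return re.compile(body + ("$" if anchored_end else ""))


def is_disallowed(path: str, disallow_rules: Iterable[str]) -> bool:
    """True if any Disallow rule matches the path (Allow lines are not handled)."""
    # An empty Disallow value blocks nothing, so skip it. re.match anchors at
    # the start, so rules behave as prefixes unless they end in '$'.
    return any(rule and rule_to_regex(rule).match(path) for rule in disallow_rules)


RULES = ["/*/media/*"]
for path in ["/owner/repo/media/branch/main/props/plss.blend",
             "/owner/repo/src/branch/main/README.md"]:
    print(path, "blocked" if is_disallowed(path, RULES) else "allowed")
```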

Will It Work?

With both of these changes complete, only time will tell whether I’ve successfully dealt with the problem. I’ll need to run with Backblaze for a while to reassure myself that it’s stable enough, but I’ll likely transfer my other S3 data to it and close out the AWS account. Since I’m already paying for a terabyte of storage with the $5/month minimum, this won’t cost much of anything, and it’ll eliminate the bill from Amazon.

But I want to be sure before I do that.

As for whether the bots play nice with the new robots.txt in place, I’ll have to keep an eye on the statistics from the new site (once I find out how to get them).

But either way, it’s clear the transfer charges from Amazon would be a problem if my PeerTube site takes off as I hope it will. It would be silly not to plan for that.