HIGHLIGHTS

This month, I worked almost exclusively on development of our new project website, a.k.a. “virtual studio”, based on my recent discovery of “YunoHost”, a package system for web applications, targeted at small-time self-hosting projects, such as ours!

DEVELOPMENT – VIRTUAL STUDIO PROJECT

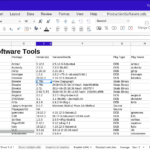

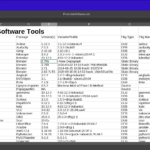

I decided, based mainly on my discovery of YunoHost, to go for a quicker and easier solution for the virtual studio that I could have working this year. I dedicated about three weeks of this month to installing and reviewing the available YunoHost “apps” to determine what I have available to build the new site, and the viability of each of them for my needs. I selected the following as promising apps that I will develop for use on our site:

- PeerTube

- Gitea

- WordPress

- Nextcloud

- Collabora

- DokuWiki

- ArchiveBox

- Vikunja

- Misskey

- SVG-Edit

- Mumble

- Galene

- Invidious

- Monitorix

- Webmin

I will be retaining some of my current infrastructure:

- WordPress

- ResourceSpace

But both will be new instances. It’s not clear yet whether I should install ResourceSpace with YunoHost, or install it from scratch on another droplet. I still have more evaluation work to do on:

- Shiori

- Shaarli

- Galene

- Mumble

- Abantecart

- Peppettes

- ‘Custom App’

It appears that the ‘Custom App’ is the right way to install static websites with YunoHost, and it may also be able to handle basic LAMP installations, perhaps enough to install Resource Space using it. Alternatively, there is documentation for packaging, and I think it might be feasible to do that for Resource Space. I am tabling the research on TACTIC and/or Prism-Pipeline for now. The new solution will continue using WordPress as a basic CMS and blog, though features originally intended for releases will be removed (taken over by the LunaGen release site).

The source control will be moved to Git / Gitea, from the old Subversion / Trac solution. Further details will be worked out in March, along with the actual building of the new site.

DOCUMENTATION

Finished up the 2021 Annual Report and got it printed by Lulu.com as a book (it’s about 150 pages, including the four annual reports and the 12 monthly summaries). This only took a couple of days to lay out, using pelican-import to migrate from WordPress to RST (or MD) and pandoc to generate a PDF, which I then could submit for printing. A very streamlined way to make a book!
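As a rough sketch of that two-step pipeline (not the exact commands used for the report), the following builds the `pelican-import` and `pandoc` command lines from Python. The file names, the choice of Markdown output, and the helper names are assumptions for illustration. Producing a PDF with pandoc also assumes a LaTeX engine is installed.

```python
import subprocess
from pathlib import Path


def import_command(wp_export, out_dir, markup="markdown"):
    """Command line to convert a WordPress XML export into one file per post."""
    return ["pelican-import", "--wpfile", "-m", markup,
            "-o", str(out_dir), str(wp_export)]


def pandoc_command(posts_dir, pdf_path, suffix=".md"):
    """Command line to combine the converted posts (sorted by name) into one PDF."""
    posts = sorted(str(p) for p in Path(posts_dir).glob(f"*{suffix}"))
    return ["pandoc", *posts, "-o", str(pdf_path)]


def build_book(wp_export, out_dir, pdf_path):
    """Run both steps; the pandoc command is built only after the import has run."""
    subprocess.run(import_command(wp_export, out_dir), check=True)
    subprocess.run(pandoc_command(out_dir, pdf_path), check=True)
```

Sorting by file name is a simplification. A real book would probably need an explicit chapter order.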

MAINTENANCE

Repaired and braced the front door on our house.

Project Re-engineering with YunoHost

They say that “Life is what happens while you’re making plans.”

The last major shake-up of my project architecture was probably in 2015, when I switched from Plone to WordPress for the site, but even then, and right up to now, I was still just using the Subversion setup I had put together in 2012.

As you know, I’ve been considering building a better system for a long time. Since 2013 or so, I’ve been focusing on TACTIC as the best method for that, and I may yet get it working, but it’s clear that I just haven’t got the time to work on it.

So, it’d be nice to get some system working that is better than what I’m using, and yet faster to set up.

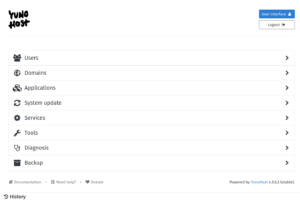

YunoHost

Late in 2021, I learned about the YunoHost project, which is an open-source package management system for web applications, built on top of Debian.

The promise of this package is that installation of web applications becomes much easier, so you don’t have to choose between the elaborate and usually poorly-documented process of installing the software, and just giving up and using third-party (and often proprietary) commercial hosting services.

This also has another benefit: it becomes pretty quick to install packages and test them out to decide what to use, rather than having to decide based on descriptions and what other people are using. It makes it quicker to “shop” for the solutions you want.

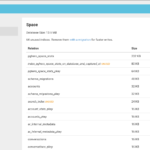

So that’s what I’ve been doing for the last ten days. I installed YunoHost (4.3.6.2 (stable)), and started trying out the “apps”.

So Far, So Good

By today, I’ve gotten through more than 45 packages. I am completely sold on YunoHost itself. This is so much faster than what I had to go through before to test out applications!

Many familiar applications are packaged, as well as some I’d never heard of before.

Of the 45 packages, no more than 3-5 failed to install. Some of the others present awkward interfaces or require stuff to be done after installation that I haven’t attempted yet.

But most of the apps I tried worked very quickly, whether I wanted to keep them afterwards or not.

It’s a little soon to write about my new site architecture, but I can list the trouble spots with the old site that I’m hoping to fix, and briefly review the applications that stood out to me as promising.

Trouble Areas

Overall

- No single-sign-on for contributors

- Not much engagement at all

- Hard to contact us

- No guest file upload or contribution point

WordPress (Blog & CMS)

- Permalinks have never worked right

- No HTTPS

- Overbuilt as Blog

- Unintuitive as CMS

- Vestigial release pages (have another site for this, now)

- That image/video carousel might not be legitimate

- Probably have trackers from social media plugins, etc.

- A lot of weird formatting still left over from migrations

- Security risks if it’s not updated regularly

Subversion/Trac (Version Control)

- Self-signed HTTPS only for Trac

- Huge/slow repo

- Dependency on central site

- Software and multimedia projects in different places

Resource Space (Asset Management)

- No integration with file-system collections

- No integration with version control

- Relatively empty

Project Management

- In my head or offline documents

Financial and Organizational Documents

- Offline, generally not published, or published erratically

- No actual system for paying contributors if we do get paid

- Storefront is Gumroad

- Membership/subscriptions are via Patreon only

- No tracking of funds to indicate how close we are to goals

Behind-the-Scenes Tutorials

- Old ones on personal YouTube

- False Start Channel on YouTube

- Low-volume Vimeo Site

- Poor organization for browsing

Interesting Packages with YunoHost Apps

These are just a collection of packages that I found promising from my survey of available packages for YunoHost.

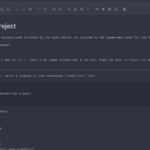

WordPress

YunoHost currently packages WordPress 5.8.3, whereas I have 4.7.22 installed currently. Significantly, the newer WordPress has a new layout engine associated with the “Block Editor”, which is a major change. My existing custom WordPress theme (“Lunatics Spacehub”, contributed by Elsa Balderrama in 2015) does not work with the new version.

However, I did test migrating posts from the old installation to the new with export and import, and that worked.

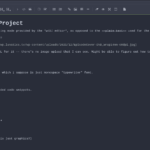

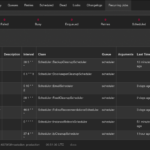

Gitea

I also looked at GitLab, but Gitea seems like the better fit, to me. It’s more streamlined. Looks a bit more like GitHub, which I’m used to. And the design is fairly compact.

I’ve never used most of the extensions that GitHub provides, so what’s missing is probably not stuff I’m going to miss.

On the other hand, I was able to quickly find documentation for the features I care most about:

- Markdown/Wiki Integration

- Large File Storage (LFS) support

- S3 Backend for LFS data

As with GitHub, Gitea provides nice integration of wiki features, using Markdown syntax. A file named “readme.md” gets automatically rendered with views of a code directory, like so:

Of course, this would mean migrating Lunatics Project out of Subversion and into Git. I think this is probably a good thing to do at this point. I’ll have more to say about that, later.

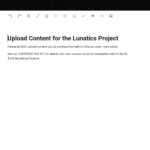

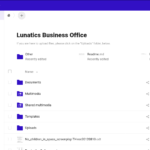

Nextcloud

This is a cloud storage platform that works very well. It could be a very good solution, both for making business documents available and for accepting uploaded contributions. I’m not quite sure about the authentication and permissions I would need for the latter to work smoothly, but I think it’s probably feasible.

Collabora Online

Collabora is basically the LibreOffice suite ported to run in the browser and integrated with the Nextcloud platform. It can handle the full range of ODF files and interactions, editable in the browser, with an interface that is not exactly like the native LibreOffice tools, but similar. Collabora uses “LOKit”, from the LibreOffice project, to do this.

MediaWiki, DokuWiki, Wiki.js

There are a variety of wiki packages represented. The most promising to me was Wiki.js, because it apparently uses Markdown internally, which would mean the pages would be in a format compatible with other tools, like Gitea. Unfortunately, the YunoHost package is apparently broken in some way, or else I missed a step somewhere. I’m unable to set up an initial user, and when I try, it just starts returning “502 Bad Gateway”. A little poking around in the documentation suggests that maybe node.js is crashing?

MediaWiki is one I’ve used before, and I’m not excited about setting it up again. It’s overbuilt, a lot of trouble to keep up with, has few protections against getting spammed, and is a bit of a prima donna — it really wants to be your main site.

DokuWiki promises to be much more low-maintenance and would work well as a tie-on element for a project. It was easy to install and easy to use. I enjoyed using it for the test. And I really like that the pages are just a collection of text files on disk. My only real problem with it is that it uses its own custom markup language, which is not a simple dialect of Markdown nor compatible with MediaWiki.

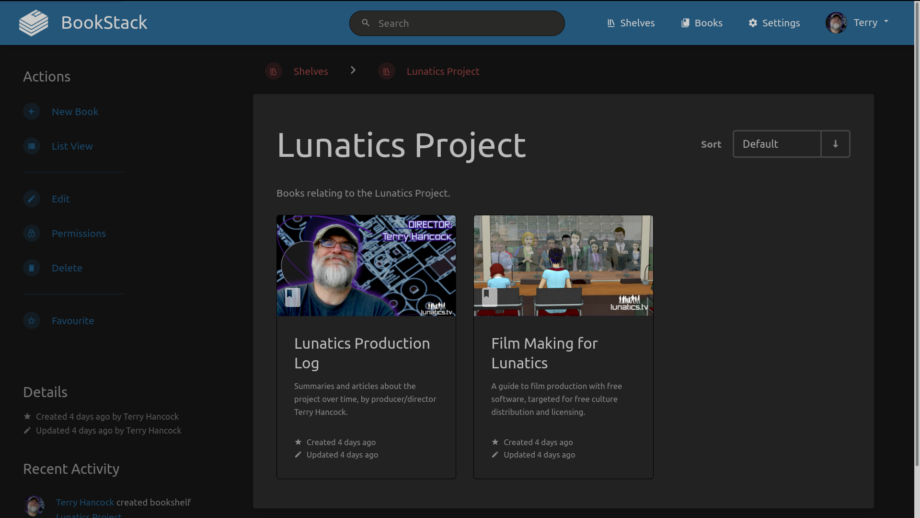

Bookstack

This is a really interesting alternative. It is basically a wiki, although it could also be considered a CMS. The files are said to be stored in Markdown format, though this is a little hard to tell, because the interface is a WYSIWYG editor. And the site is set up to organize things as “Books” on “Shelves”.

That’s a very appealing metaphor for me, especially for larger works.

The only problems I see are with getting data in and out of it – I was unable to find any import, export, or migration options. Without them, it’s a lot less useful to me. And while it claims to use Markdown for documents, I can’t find them stored on disk, suggesting they are in a MySQL database, which makes them a little harder to get to (had they been stored on disk, as with DokuWiki’s pages, that might have offered a simple import/export option).

I’m wondering if perhaps this software is just very immature and hasn’t had these tools written for it yet?

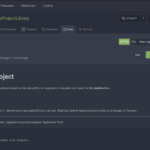

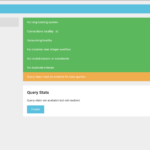

Vikunja

I looked at several different “to do list” and “kanban” applications, and this one seemed pretty user friendly.

It has multiple ways of viewing the same “tasks”, as well as mechanisms for relating the tasks to each other (such as “task A has to be done after task B”).

The only thing that gave me pause was realizing how much time might be required, just to fill it up with items to do. Still, if I’m going to do that, this looks like it could be a good way.

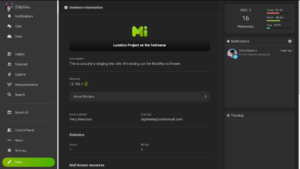

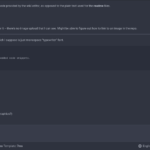

Misskey

I installed Mastodon, Pleroma, Epicyon, Hubzilla, and Misskey as potential Fediverse (ActivityPub federated) servers, but the only one I could easily set up and immediately get working through the web was Misskey. It also has a cute design aesthetic and a very nice dashboard. I might just stick with it.

I probably will try to get Mastodon and perhaps the others installed to do a proper comparison, since I’m set up to do that, but I’m leaning towards just sticking with Misskey. One nice thing about the Fediverse is that it doesn’t matter so much which server you choose, because you can change later if you want to, without losing your Fediverse identity.

Hosting a Fediverse instance also introduces some other possibilities, like integrating it with the blog to provide a commenting feature that’s visible to social media users.

PeerTube

PeerTube is the federated answer to YouTube, Vimeo, and other video hosting sites. It solves the bandwidth problem for video streaming by setting up a swarming stream when you view.

PeerTube was the most obvious advantage I hoped to get from YunoHost, and was the first piece of software I installed using it. It’s pretty complicated, and has a lot of features that I find really interesting. Some of it feels a bit “raw”. Theming it is not well-documented, as far as I can tell, and so integration may be fairly complicated.

The only really serious problem with it, relative to using a commercial video hosting service, like Vimeo, is performance. It’s difficult to get through a video on PeerTube without it stuttering and stopping to buffer. This is true, even if I boost the size of my Digital Ocean droplet VPS up to a much higher service level than I can afford — certainly so, if I only increase it by the amount that it costs me to use Vimeo.

On the other hand, the swarming download system means that if the videos I put up are replicated by other PeerTube instances, the performance may become entirely acceptable.

And there is a much greater level of control over the content when I’m managing it on my own server.

One problem that might arise is storage (videos take up a lot of space!). There is some documentation about moving some of that into S3 storage, but I’m not entirely clear on how that will work or whether it can stream directly from S3 storage. That’s one of the things I hope to do further tests on.

Webmin

Webmin is an old standby. I was using this to manage my VPS at Rose Hosting in the 2000s. It allows you to do a lot of common Linux configuration tasks through a web interface. This is probably the easiest method to manage things like static-hosted websites on a YunoHost server.

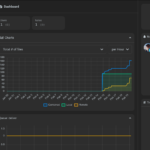

Monitorix

As the name suggests, this is a monitoring dashboard to see how your server is doing. I like the available graphics a lot.

NetData

Another monitoring dashboard. Not sure which I like better.

SVG-Edit

This is a “digital whiteboard” application using SVG. I found this to be better than Whitebophir, because it has more drawing tools readily available, and in particular, because I can import images. The most important use-case for a whiteboard for me would probably be marking up stills from animation shots or screen-captures from Blender.

The only disappointment is that it says it’s “not maintained”. I don’t know if that means the application itself, or the YunoHost installer app for it. Also, when you add an image, you have to use a URL – I haven’t found an upload option, so you’d have to upload it into one of the other applications, first, and reference it there.

ArchiveBox

A mini “Archive.org” of your very own. Actually, it’s fairly different, but it archives snapshots of external websites using various methods (presumably because different methods will work better for different websites).

What’s Missing

Of course, YunoHost doesn’t have everything. And unfortunately, none of the DAMS solutions I’ve been using or considering are included:

- Subversion

- Trac

- Resource Space

- TACTIC

- Prism-Pipeline

This is unfortunate. Instead, going with YunoHost probably means switching to Git, which is better than Subversion in many ways, but far from the ideal system I was striving for.

On the other hand, I have a notion that I can use the “Webhooks” feature in Gitea or a filesystem crawler, along with a web API for Resource Space, to scan for new files and check them into the DAMS automatically.

I think that’s a pretty simple script to write. It means I’ll have to learn the Resource Space web API, and also that I’ll have to do a fresh install of Resource Space, configured to index existing files, rather than store them separately (I think this is a much better way to use it).
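A minimal sketch of the filesystem-crawler half of that idea, using `os.walk`, might look like the following. The list of asset extensions and the `register` callback (which would wrap whatever Resource Space API call does the actual check-in) are assumptions for illustration. Nothing here talks to Resource Space itself.

```python
import os

# Assumed set of file types worth indexing; adjust to the real project.
ASSET_EXTENSIONS = {".blend", ".png", ".svg", ".flac", ".kdenlive"}


def find_new_assets(root, known_paths, extensions=ASSET_EXTENSIONS):
    """Yield asset paths (relative to root) that are not yet in known_paths."""
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune version-control metadata in place so os.walk skips it.
        dirnames[:] = [d for d in dirnames if d not in {".git", ".svn"}]
        for name in sorted(filenames):
            if os.path.splitext(name)[1].lower() not in extensions:
                continue
            rel = os.path.relpath(os.path.join(dirpath, name), root)
            if rel not in known_paths:
                yield rel


def sync(root, known_paths, register):
    """Call register(path) for each new asset and return the paths registered."""
    added = []
    for rel in find_new_assets(root, known_paths):
        register(rel)  # hypothetical hook: would call the Resource Space API
        known_paths.add(rel)
        added.append(rel)
    return added
```

Keeping the set of known paths outside the crawler means the same function could be reused for either a Git working copy or a plain directory such as the music library.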

Presumably I could use a similar method to migrate to TACTIC in the future. But if I can write one, I should be able to write the other.

Also, of course, if I get deeply enough into this approach, I might write the YunoHost packages for any of these other applications myself.

In the meantime, I will just install ResourceSpace separately, using Ansible, as I have been doing.

Pros and Cons of YunoHost

I feel a little bit like a man who has been living in a tent for 10 years, in order to save money to build a really nice mansion. After a while, you have to start wondering if maybe it’d be worth moving up to, say, a trailer home, as an intermediate step!

The YunoHost approach to setting up my project is essentially bundling together several separate micro-services to get a system I can use. It will never be as integrated as a single project management suite, but on the other hand, it should be much easier to set up.

At first, I figured that I’d be giving up on the Ansible approach to setting my sites up in a completely repeatable way, but it may be possible to deploy YunoHost with Ansible, so I might be able to make a playbook for it after all (although that project is a little out of date!).

There are a lot of individual design problems and decisions to be made, which I’ll address in separate articles. For this week, I’m just considering the options.

The cons to this approach are:

- Giving up on (or delaying) my integrated DAMS/VCS development

- Possibly less automated than deploying with Ansible

The pros are:

- Faster to get it set up than alternatives

- More familiar tools for a lot of potential contributors

Given that I would like to get the project back up to speed THIS year, those are pretty important pros. So, I think I’m pretty much settled that I’m going to do it this way, and get something set up in the next month or so.

The next challenge is to figure out exactly how I’m going to connect it all, and migrate from my present setup.

SELECTED COMMENTS:

BTW: earlier today, I learned that DokuWiki DOES support Markdown and reStructured Text, via plugins. That’s probably going to make it the practical choice for me (unless I just rely entirely on Gitea’s integrated wiki pages, which use Markdown). — Lunatics Project

Another weird thing: a couple of days later, and I CAN log into Mastodon. Did it take that long? Did I somehow not enter the correct username/password (I really thought I did — the obvious ones worked). I don’t know what happened there. But it’s nice I can look at the admin interface, now. — Lunatics Project

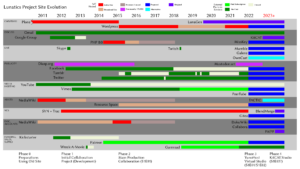

Lunatics Project Site – Past & Proposed

I’ve pretty much finished with my evaluation of available YunoHost web applications, and I’ve moved on to designing and planning upgrades to the project site. This is summarized in the timeline above (I’ve attached the original full-resolution PNG file below, for better readability).

To get some perspective, I also did a little looking backward, to see how I had solved problems in the past.

Essentially, I can break this chart up into 5 phases:

Phase 0: < 2012

This was what I had set up when I was writing for Free Software Magazine, and I just quickly cobbled together some components (mainly MediaWiki) to accommodate a new project.

Phase 1: 2012-2014

I introduced a version control system with Subversion and Trac, and this became the initial system we used in 2012-2014. It was still heavily tied to my legacy Plone installation. This was what we had during a lot of the initial series development work.

Phase 2: 2015-2021

With the help of Elsa Balderrama in 2015, I switched the site over from Plone to WordPress, and migrated a lot of posts from Kickstarter and the old Plone blog to the new site. This was the setup we had when I started working in earnest on “S1E01”, the pilot episode, as it’s now defined.

I never got any single-sign-on working, nor a trusted certificate with “Let’s Encrypt”, and the Subversion software is a bit clunky for users (particularly non-technical users) to work with. It has required a lot of support work.

But it did work well enough for us to get started on producing the assets for our first episode. Since then, I’ve moved on to production of the episode itself, which has taught me a lot about how to organize the source files.

I used to worry that I couldn’t make them make sense in a fixed file hierarchy, but at this point, I think I have a pretty good system of organization.

Phase 3: 2022-2023?

The site I propose to set up with the help of YunoHost applications, most notably Gitea, is an intermediate step between what I have now and the “dream virtual studio” I’ve been talking about, but not making much headway on.

Rather than try to integrate everything tightly around one flagship application, this will be a collection of micro-services provided by existing web applications, with a single-sign-on provided by YunoHost.

The notion is that I can quickly build something good enough to finish S1E01 (“No Children in Space”) and then hopefully recruit some help to complete S1E02 (“From the Earth…”). I want to get collaboration to be reasonably painless, and possibly raise funds, using the completed S1E01.

My goal is to have this functioning sometime in March. The main challenges are:

- Migration of blog to new WordPress (easy?)

- Migration of SVN Repo to Multiple Gitea Repos, with Git-SVN & Git-LFS/S3

- Fresh Resource Space Install with External File Pointers

- Writing a Sync Script to Update Resource Space from Filesystem & Gitea

- Completing LunaGen separation & creating a “Lunatics Project” hub

The SVN-to-Git migration is pretty well documented, so I hope it won’t be hard.

The main complication is that I’m going to be using LFS, and I hope to have the LFS storage in cheaper S3 “buckets” rather than on the server filesystem. I’m currently renting S3 space from AWS, but S3-compatible storage is offered by other providers as well, so it should be possible to migrate if I want to get away from AWS. It looks to me like Gitea has already anticipated this kind of setup, so I’m optimistic that it will all work together.

Resource Space will have to be installed from scratch, because I need to configure it very differently than it is now, to work as an indexing tool, rather than storing files internally. I want it to have read-only access to the repo used by Gitea as well as some filesystem storage (for the music library, which I already uploaded; that’s also in S3, mounted locally via s3fs).

And, of course, to make the most of that, I’ll need to write a script to scan the data sources and check in assets automatically, using the Resource Space API. That’s a little more ambitious, but it is basically just an application of os.walk in Python, if I’m not too fussy about it. I could probably make it a little more sophisticated for Gitea in particular, by adding a “webhook” system to trigger it when the repo is updated.
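The webhook side could be sketched roughly as below: check that a push notification really came from Gitea, then pull out the files it touched. This assumes Gitea sends a hex-encoded HMAC-SHA256 of the request body in an `X-Gitea-Signature` header, and that each commit in the push payload carries GitHub-style `added` and `modified` lists. Both should be checked against the Gitea version actually installed. The function names are made up for illustration.

```python
import hashlib
import hmac
import json


def verify_signature(body: bytes, secret: str, signature_hex: str) -> bool:
    """Compare the received signature with an HMAC-SHA256 of the raw body."""
    expected = hmac.new(secret.encode(), body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_hex)


def changed_files(body: bytes):
    """Return the sorted set of paths added or modified by a push payload."""
    payload = json.loads(body)
    paths = set()
    for commit in payload.get("commits", []):
        # Assumed field names; tolerate commits where a list is missing or null.
        for key in ("added", "modified"):
            paths.update(commit.get(key) or [])
    return sorted(paths)
```

A small web endpoint would call `verify_signature` first and only then hand the result of `changed_files` to the sync script, so that random visitors cannot trigger check-ins.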

I’m fairly happy with the Lunatics “Release” site I created with my custom script “LunaGen”, and it occurred to me that I can use it to create a top-level “hub” site for the “Lunatics Project” site as well. This will take some of the burden off of WordPress, and make it less onerous that I’m losing my custom WordPress theme in this migration.

Between Gitea and a fully-populated Resource Space system, this should make our production files much more accessible to people outside of the project.

I was in the process of separating the LunaGen application code from the specific code for generating the Lunatics Release site prior to Christmas break. I’ll have to get back onto that — I was already pretty close. Once fully separated, it’ll be easy to use the program to generate the two different sites (and possibly others).

Most of the other features are just plain installations of existing tools, particularly video-conferencing, and the Collabora/Nextcloud tools for exposing LibreOffice documents online (which will have to do for accounting in this phase).

I’ve decided to implement a Misskey federated social-media instance on our server. This will initially provide a home for our “brand” account. I hope to integrate it as a social-media comment/discussion system. I’ll probably retain my existing personal account on Mastodon.art for the time being, but I might migrate onto my self-hosted site later in the year. Supposedly that’s not hard to do on the Fediverse. I suppose I will find out!

Phase 4: > 2023?

Is this pie-in-the-sky? Well, maybe. But the point is, I’m not going to try to get this done in 2022, so it doesn’t get in the way of production.

But just for clarity, I’ve listed some of the goals I might want to work on for that site:

- Migrating to TACTIC? Or something else?

- Writing & Adopting the KitCAT Context Manager Tool

- Possibly implementing a BlendMerge utility to fix Blender file version conflicts

- Custom accounting software: “Production Accounting for Free Film”

These are all pretty intensive projects, and it might not really be possible to work on them unless I have some development help. Or unless I get a lot better at programming.

Of course, it’s also possible that the Phase 3 studio will work out so well that I won’t feel the need for these developments, but I doubt it.

In the past, I had proposed creating a “BlendMerge” program by applying a general solution for version control of data in “directed acyclic graph” form (which a Blender file can be analyzed as).

However, there may be a faster approach, which is to explode a Blender file into components and treat them as individual files to version. This would probably allow us to reuse more existing code, and yet solve 80-90% of the conflicts that would realistically arise in handling Blender files through a “merge-based” version control model.

Come 2023, or after we release an episode or two — whichever comes first — I’ll probably revisit these ideas and evaluate whether it’s time to tackle them.

Getting Back to Production

If everything goes even remotely to plan, I’ll be back in production in March or April, finishing up the character animation in episode 1.

2MT: 2-Level Editing in Kdenlive (for 22-2-22)

It’s been over 7 years since my last “2-Minute 2-Torial”, so it seems only right to bring it back for “Twos Day” — Tuesday, 22-2-22 (ISO – or 2/22/22 or 22/2/22, if you prefer your dates American or European, respectively).

In brief, the topic is a quick demonstration of a way I like to use the ability to embed Kdenlive project files as clips in other Kdenlive project files:

Transcript

Did you know that you can use a Kdenlive edit as a clip in another Kdenlive edit?

This is very useful in animation workflow, because we often want to use stand-in versions of shots, and then replace them, later.

In this shot, for example, I simply used a drawing and panned across it in Blender to create a quick “storyboard animatic”.

I also wasn’t sure exactly how long it should be. I can adjust that with the speed setting in Kdenlive.

Later, I went through several versions of the shot, as more and more elements were animated.

These aren’t just different shots. In Kdenlive, they are different kinds of shots:

The storyboard animatic may use the “Change Speed” tool to adjust the timing, or the “Transform” effect to pan and zoom the image, working with just a few still images as the source.

The Open GL animatic previews are usually AVI video files, rendered directly from Blender.

And the final renders are usually a series of frames rendered to a lossless format like PNG images, for maximum fidelity. This is called a “PNG stream”, and is the usual format for our final animation output.

Each of these is stored differently, and you can’t simply reload the clip in the Kdenlive project bin with the new versions!

But you can update them easily in a separate Kdenlive file.

Then you use that Kdenlive file AS the clip in the scene you are editing.

With proxy-workflow (which I’ll explain in another video), this is just as fast as rendering the shot and including that, but there will be no loss of quality when the project is rendered.

It also makes it easy to use special effects, like limited framerates, as I used for this “timelapse” shot. Only the shot file needs to know that it was animated “on threes”.

The final edit file only sees video, through all of these steps, and it updates automatically from the linked Kdenlive project!

Bringing Back the 2-Minute 2-Torials

Clearly, I’ve decided to bring these back as part of my new strategy for 2022. I’d like to start producing one per month, if I can, to be released on the 2nd Tuesday, following the theme (yes, I know this isn’t the 2nd Tuesday; this is a special case!).

This is also, I think, my first Kdenlive tutorial in the series.

SELECTED COMMENTS:

The video is already public on both Vimeo and YouTube, now, BTW. I wanted to get it out on 22-2-22.

Winnowing WordPress and Getting Git

Initially, I was pretty upset that the old WordPress theme couldn’t just be ported over to the YunoHost WordPress, but I selected a “good enough” theme, and started customizing it. I’m now getting to the point where I’m fairly satisfied with it, and I think perhaps I will just stick with WordPress as the top-level CMS on the project site. This theme is “Grid Next”, with changes mainly to font and color to make it more consistent with the old site, using the “Additional CSS” customization feature.

Technically, this is still just a dry run. I’ll be repeating all of this on the new project server, which I intend to rebuild from a fresh install after I’m finished with this experimentation phase.

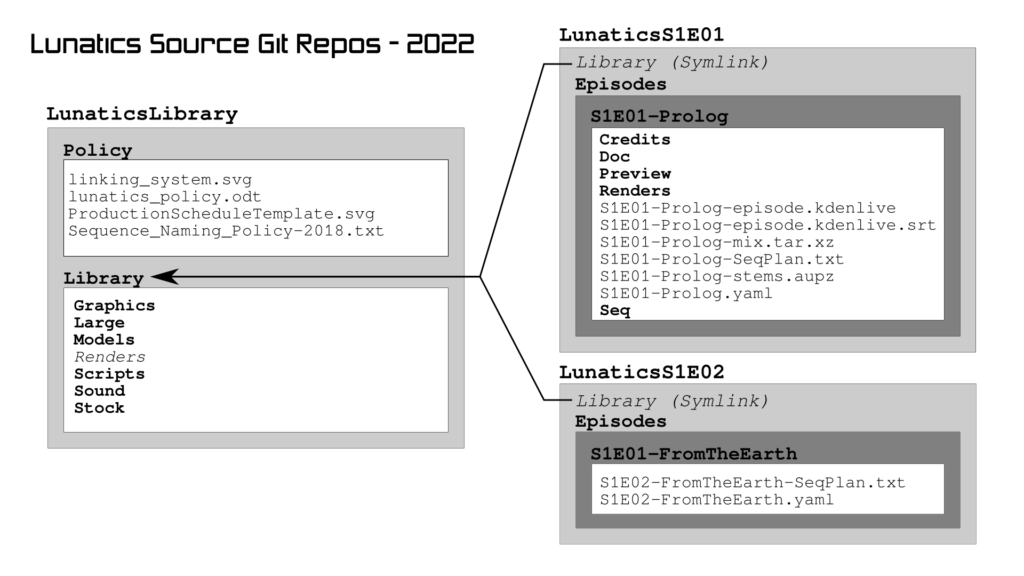

Git

I am also attempting to convert my Subversion repo into multiple Git repos.

This is pretty challenging, and in fact, I found five different options for what I could do with the transition, before settling on this solution. My original notion was that I would just name the repos after the directories I was pulling out of the Subversion repo, but this would give them very generic names! Or else I would have to revise all of the relative links in all the Blender files!

Instead, I think I will have multiple repos, with symlinks connecting to the Library:

This way, all the contributor has to do is to check out two repos: the “LunaticsLibrary” repo and the episode repo they are working on (e.g. “LunaticsS1E01”). They must be adjacent to each other in a working directory.

But the relative symlink stored in the episode source code will point out of the Episode repo and into the Library repo — recovering the relationship they have now, in the combined Subversion repo.

In addition, I will need to make sure to create branch releases for the library, so that contributors know which version of the library to use with the episode they are working on.
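To make the two-repo layout concrete, here is a small sketch that creates the relative symlink from an episode checkout into an adjacent library checkout. The link name “Library” and its location at the top of the episode repo are assumptions, based on the top-level directory names in the Subversion tree. The real layout may differ.

```python
import os


def link_library(workdir, episode="LunaticsS1E01",
                 library="LunaticsLibrary", link_name="Library"):
    """Create <episode>/<link_name> pointing at ../<library>/<link_name>."""
    link_path = os.path.join(workdir, episode, link_name)
    # Relative target: climb out of the episode repo, then into the library repo.
    # Being relative, it keeps working wherever the pair of checkouts lives.
    target = os.path.join(os.pardir, library, link_name)
    os.symlink(target, link_path)
    return link_path
```

Because Git stores a symlink as its target string, the link itself can be committed in the episode repo, and it resolves as long as both repos are checked out side by side. Symlink support on Windows checkouts is a separate question that this sketch ignores.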

Other Methods

For completeness’ sake, the four other methods that I found for handling this situation were:

Multiple Repos in One Directory

It’s evidently possible to specify the git repo directory in a working copy, so that each repo uses a different directory instead of “.git”, such as “.git_lib” or “.git_S1E01”. Unfortunately, the potential contributor would have to know how to specify the alternate directory in their git commands, and it’d be easy to add files to the wrong repo by accident.
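To show what that burden looks like, this sketch builds the command line a contributor would need for every operation, using Git's `--git-dir` and `--work-tree` options. The helper name and the example file path are hypothetical.

```python
def git_command(repo_dir, *args, work_tree="."):
    """Build a git command that uses an alternate repo directory instead of .git."""
    return ["git", f"--git-dir={repo_dir}", f"--work-tree={work_tree}", *args]


# The same "add" must be routed to the right repo by hand each time:
library_add = git_command(".git_lib", "add", "Library/props/chair.blend")
episode_add = git_command(".git_S1E01", "add", "Library/props/chair.blend")
```

Nothing stops the second command from succeeding, which is exactly the kind of mistake described above.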

Since my typical contributor won’t be particularly savvy with Git, this seems like a bad idea.

Single Repo, with Subtree

I don’t fully understand this one, but apparently it is sometimes used for vendorizing subpackages required for a project.

Single Repo, with Branches

Conceivably, I could use a single repository, with Git “branches” for each episode. This sort of makes sense. I will probably need to create branches for the library repo anyway, to make sure that the expected version of the library files is available to each episode. But this does seem less transparent and intuitive than using separate repos, and potentially could result in a very large repository.

Multiple Repos, Update Links in Blender

Rather than using symlinks to associate the Library directory from the “LunaticsLibrary” repo, I could just refer to the files directly, including the repository name. The downside of this is that it would require me to update a lot of Blender files, and of course, it wouldn’t work for previous versions of the files.

Converting to Git

The process for converting the Subversion repo to Git repos is relatively simple, but painfully slow. After looking at methods using git-svn directly, I decided to use the Ruby svn2git utility, which makes this simple.

I first had to define a “users” mapping that converts the string IDs used by Subversion into the name and email format used by Git.
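For reference, the mapping file has one line per Subversion user, in the form "svn-id = Full Name <email>". The usernames and addresses below are made up for illustration, and the file is written to a temporary location rather than the real users.txt.

```shell
#!/bin/sh
set -e

# Temporary file standing in for the real users.txt.
authors="$(mktemp -d)/users.txt"

# Hypothetical Subversion IDs mapped to Git-style names and emails.
cat > "$authors" <<'EOF'
jdoe = Jane Doe <jdoe@example.com>
asmith = Alex Smith <asmith@example.com>
EOF

# Sanity check: count the lines that follow the "id = Name <email>" shape.
grep -Ec '^[^ =]+ = .+ <[^<>]+@[^<>]+>$' "$authors"
```

Any Subversion ID missing from the file will typically stop the conversion partway through, so it is worth listing every committer before starting a run that takes hours.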

Then, I created the “LunaticsLibrary” directory, moved into it, and ran the following to extract only the Library files into this Git repo:

    svn2git https://svn.lunatics.tv/lunatics \
        --authors ../lunatics/users.txt \
        --exclude Episodes

The exclude expression eliminates all the episode directories.

Then, to get the first episode sources, I had to use a more elaborate set of excludes:

    svn2git https://svn.lunatics.tv/lunatics \
        --authors ../lunatics/users.txt \
        --exclude Library --exclude Policy \
        --exclude 'Episodes/S1E0[02-9]-.*' \
        --exclude Render \
        --exclude Episodes/TeaserTrailer \
        --exclude Episodes/Trailers \
        --exclude Episodes/Unscheduled
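The episode pattern in that command is a regular expression: the character class [02-9] matches 0 and 2 through 9, so every first-season episode except S1E01 is excluded. Here is a small check of that logic, using grep -E as a stand-in for the matching done during conversion. The directory names are hypothetical.

```shell
#!/bin/sh

# Pattern taken from the exclude list; everything else here is illustrative.
pattern='Episodes/S1E0[02-9]-.*'

# Returns success when a path would be caught by the exclude pattern.
is_excluded() {
    printf '%s\n' "$1" | grep -Eq "$pattern"
}

# Hypothetical episode directory names.
for path in \
    'Episodes/S1E00-Example' \
    'Episodes/S1E01-Example' \
    'Episodes/S1E02-Example' \
    'Episodes/S1E09-Example'
do
    if is_excluded "$path"; then
        echo "excluded: $path"
    else
        echo "kept:     $path"
    fi
done
```

Only the S1E01 path should be reported as kept.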

So far, so good! But in fact, it takes hours to execute the Library checkout! So the whole process is going to take a couple of days, which is part of why I was also spending time on customizing WordPress.

Of course, this is just getting from SVN to Git, and breaking the source tree up into sections. I will then have to convert to the Git-LFS system, as well as cloning the projects into Gitea.

SELECTED COMMENTS:

The “usual” git way to do this is with submodules, where the “library” repository is shared by all the individual episode repositories. Then you can have the specific commit of the library repo that you need for each episode and it can all be managed by git.

Timesheet / Projects / Output

TIME BREAKDOWN

Production:

| Task | Hours |
|---|---|
| 2-Minute 2-Torial | 7.3 |
| VTuber Workflow Leads | 0.2 |
| Ancillary Products Leads | 0.4 |
| Subtotal | 7.9 |

IT/Virtual Studio Site:

| Task | Hours |
|---|---|
| YunoHost Droplet Install | 1.1 |
| YunoHost Apps Evaluation | 29.4 |
| Updating Old WP Social Bar | 1.0 |
| Static Workflow Research | 0.2 |
| Wordpress/Git Migr. Tests | 21.5 |
| Subtotal | 53.2 |

Writing/Documentation:

| Task | Hours |
|---|---|
| Annual Report Pre-Press | 5.8 |
| January Summary Post | 3.9 |
| Screencapping YNH Apps | 6.2 |
| YNH Experiment Drafts | 1.9 |
| YNH Review for Patreon | 7.1 |
| Virtual Studio Timeline | 6.3 |
| Wordpress/Git Post | 1.1 |
| Subtotal | 32.3 |

Marketing/Networking/Research:

| Task | Hours |
|---|---|
| Patreon Creator Survey | 1.4 |
| FLOSS Philosophy Updates | 1.4 |
| I Love FLOSS Day Networking | 1.4 |
| Subtotal | 4.2 |

TOTAL ACTIVE SCREENTIME: 97.6 hrs

WRITING (WORDCOUNTS):

| Post | Words |
|---|---|
| January Summary Post | 4079 |
| Cleaning House for 2022 (draft) | 1029 |
| February Summary | 5763 |
| Total | 10,871 |

PRODUCTION (RUNTIME):

| Video | Runtime |
|---|---|
| 2-Leveling Editing Kdenlive | 2:00 |
| Total | 2:00 |

Additional Images from February

I took a lot of screencaps this month, while exploring the available YunoHost apps.

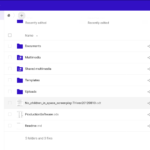

Gitea

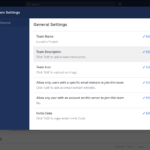

WordPress

Since I knew what to expect from WordPress, I didn’t take as many screencaps. At this point, I wasn’t sure if I was going to stick with WordPress or switch to something else for the Production Log.

PeerTube

Mastodon

I took a lot of images of the administration interfaces for Mastodon after I had determined I wasn’t going to use it — for future reference in case I want to change my mind about the federated social media server.

Pleroma

Misskey

Other Federated Social Media

Nextcloud, Collabora, OnlyOffice

Omeka-S

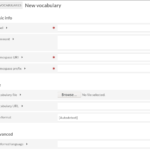

Markdown Editors & Wiki

Graphics & WhiteBoard

Vikunja

Other Management & Groupware

Realtime Communications

Friendlier Front-Ends: Invidious, Bibliogram, Alltube

ArchiveBox Tests

ArchiveBox is a little like having your own little Archive.org (except that it also provides a method to trigger the real Archive.org to snapshot sites for you). It collects a variety of means for preserving HTML, with varying degrees of success. I made several tests to see how well it might work for us.

Miscellaneous Applications

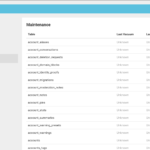

Statistics & Administration (Monitorix, NetData, Zabbix, Webmin, etc)
